
Written By:

Date Posted: March 8, 2002

With the announcement and release of the GeForce 4, many of you are probably saving your pennies towards it. No doubt, the performance and features are impressive, but what about those who don't have the cash for nVidia's latest and greatest? You can get the castrated GF4MX now, but simply put, from what I've seen, you're better off with a GeForce 3 for now. Speaking of which, price drops were seen on all the GeForce 3 cards since the GF4 announcement, and we picked up the top of the line Visiontek 6964 GF3 Ti500.

I can't comment honestly on its performance against the GF4 Ti4600, but you can read more about GF4 technology at , which had the best technology profile of the GF4 thus far. We'll compare it with some other popular cards, and test its performance with the games of today. I'm not going to go into a whole lot of detail about GeForce 3 technology, as that was more or less covered in dsp's Visiontek 6564 review, and my MSI StarForce 822 review.

Specifications

Graphics Chipset: GeForce3 Ti 500

Graphics Core: 240MHz, 256-bit 2D/3D GPU

Memory/Interface: 64MB DDR/128-bit wide

Memory Clock: 500 MHz SDR Equivalent

Memory Bandwidth: 8.0 GB/sec.

Fill Rate (texels): 1920M/sec.

2D/3D resolution (max): 2048 x 1536 @ 75Hz

Shadow Buffers

Enables characters and objects to cast shadows on themselves (self-shadowing) and softens the edges of shadows to maximize the realism and impact on the viewer.

3D Textures

Creates material properties that cut through objects.

nfiniteFX™ Engine

Enables dynamic breakthrough effects, which deliver the next leap into realism 3D graphics.

Vertex & Pixel Shaders

Adds personality and ambience, like facial expressions and surface textures simulating reality.

Lightspeed Memory Architecture™

Ensures peak performance while generating earth-shattering effects at all resolutions.

High-Resolution Antialiasing (HRAA)

Delivers a high level of detail and crisp, clean lines at high frame rates featuring Quincunx AA mode.

High-Definition Video Processor (HDVP)

Turns your PC into a full-quality DVD player.

VGA / TV / S-Video Out / DVI-I Connectors

Gives end-users the option of big-screen gaming on a TV, on a regular monitor, or with a digital flat panel monitor. The GeForce3 Ti 500 GPU supports DirectX 8.1 features and special effects for the ultimate 3D experience.

AGP 4X/2X, AGP Texturing Support

Takes advantage of new methods of transferring 32-bit color textures and high-polygon-count scenes.

Microsoft® DirectX® and OpenGL® Optimizations and Support

Delivers the best performance and guarantees compatibility with all current and future applications and games.

Unified Driver Support: Guarantees forward and backward compatibility.

Minimum System Requirements

• AMD K6-2, Intel Pentium II class processor, or higher

• 64MB RAM

• AGP 2.0 Compliant Socket

• A CD-ROM drive

• 10MB available disk space

• Windows 95 OSR2 (or higher) or Linux

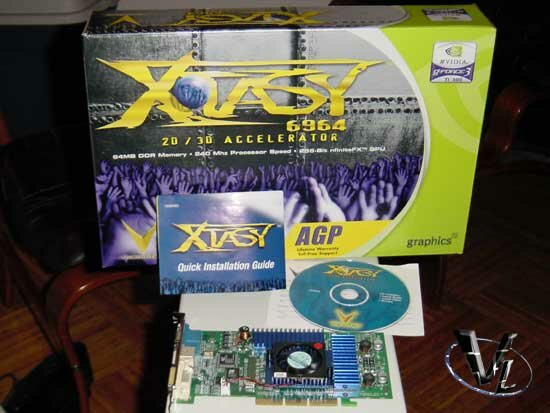

Like pretty much every other video card you buy, you get the card, instructions and some drivers. There's not much else included, which I thought was a shame. Typically, I consider game bundles nothing more than fillers, but considering the cost of the GeForce 3 Ti500 (at the time of release, as the bundle hasn't changed since), you'd think at least DVD software or something would be included. The driver CD is a throwaway, as nothing is really customized from nVidia's reference drivers, and they are outdated.

Here's a closer shot of the card. The heatsinks are blue, which looks very nice, but other than that, there isn't anything particularly remarkable.

A closer shot of the heatsink/fan combo. For those of you who aren't careful, you'd better be, since there's no fan guard included. I doubt this will be an issue since it faces the first PCI slot, away from most wires and fingers. The fan cools the GeForce 3 GPU, which runs at a stock speed of 240MHz. Performance is adequate, as we were able to overclock the core slightly, but if this is what you plan to do, I suggest a Chrorb. With stock cooling, the GPU averaged about 59°C.

One thing to point out about our card, which may or may not be a problem for some of you, is that the heatsink and fan were detached from the card. Yes folks, we needed to install them after opening up the package. For the DIY crowd, this will save you the hassle of removing them for a beefier GPU cooler. For everyone else, don't worry, it's easy to install. Just get rid of the TIM though, and spread some real compound on it.

If you turn your head upside down, you can also see the Conexant Bt869 chip used for TV-Out duties. The quality was acceptable, at least, no worse than I experienced with other GeForce cards.

Being the top of the line card (the imminent release of Visiontek's GeForce 4 Ti4600 notwithstanding), you have a plethora of output options available.

Outside of the standard 15 pin VGA connection, you have the TV-out and a Digital output connector should you need it.

Like the GPU heatsink, you have nVidia reference heatsinks for the ram, but in blue. The ram itself runs at 250MHz. The effective speed of the ram is actually 500MHz, when you consider that DDR ram transfers data twice per clock. We were a little disturbed that the ram sinks didn't seem to really have any thermal interface. They seemed (and we verified this after yanking them off) to be only glued with dabs of whatever they used. I suggest that if you're going to be pushing this card, invest in some thermal adhesive, or at least some frag tape.
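For those who like to see where the spec sheet numbers come from, here's a quick sketch of the arithmetic in Python. The function names are my own, and the fill rate part assumes the GeForce 3's four pixel pipelines with two texture units each, which the spec list above doesn't state. Treat it as a back-of-the-envelope check, not anything official from nVidia or Visiontek.

```python
# Back-of-the-envelope check of the Ti500 spec sheet figures.
# Function names are illustrative; the pipeline and texture unit
# counts are assumptions not listed in the specifications above.

def effective_clock_mhz(base_clock_mhz, transfers_per_clock=2):
    """DDR moves data twice per clock, so the effective rate doubles."""
    return base_clock_mhz * transfers_per_clock

def memory_bandwidth_gb_s(effective_mhz, bus_width_bits):
    """Peak bandwidth = transfers per second * bytes per transfer."""
    bytes_per_transfer = bus_width_bits / 8
    return effective_mhz * 1e6 * bytes_per_transfer / 1e9

def texel_fill_rate_m(core_mhz, pipelines, texture_units_per_pipeline):
    """Peak texel rate in millions of texels per second."""
    return core_mhz * pipelines * texture_units_per_pipeline

effective = effective_clock_mhz(250)                  # 250MHz DDR ram
bandwidth = memory_bandwidth_gb_s(effective, 128)     # 128-bit interface
fill_rate = texel_fill_rate_m(240, pipelines=4, texture_units_per_pipeline=2)

print(f"Effective memory clock: {effective} MHz")
print(f"Peak memory bandwidth:  {bandwidth:.1f} GB/sec")
print(f"Peak texel fill rate:   {fill_rate}M texels/sec")
```

These are theoretical peaks, and they line up with the 500MHz, 8.0 GB/sec and 1920M/sec figures in the specifications. Real games won't hit them.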

I'm including a brief summary of the GeForce 3 technology, since a few of you might want a refresher. Skip ahead if you don't need this.

The nfiniteFX Engine

"With the nfiniteFX engine's programmability, games and other graphics-intensive applications can offer more exciting and stylized visual effects. Vertex and Pixel Shaders are two patented architectural advancements that allow for a multitude of effects."

What the nfiniteFX Engine does is let developers code various special effects however they want, since the new GPU is fully programmable. They're no longer limited by a set of coding rules imposed by the GPU, and what this should mean is more photorealistic games, especially those coded within the specifications of DirectX 8, with which the GeForce 3 is fully compliant. There are two main components that make up the nfiniteFX Engine: vertex and pixel shaders. According to nVidia's description, a vertex is the corner where two edges of a triangle meet. Because polygons are made up of triangles, being able to change the values of their vertices will create a more realistic scene. The is a great example of vertex shaders in action. Pixel shaders are used to create textures and surfaces. Rather than just placing a bitmap on an object, pixel shaders can modify values on a per-pixel basis. This makes for, again, more realistic images. The shows this feature off.
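If the idea is still fuzzy, here's a rough analogy in plain Python. This is not real shader code (actual GeForce 3 shaders are written against DirectX 8 or OpenGL extensions and run on the GPU), and the function names and the ripple/lighting effects are made up purely for illustration. The point is only that a vertex shader is a small program run on every vertex, and a pixel shader is a small program run on every pixel.

```python
import math

# CPU-side analogy only: hypothetical functions, not an nVidia API.

def vertex_shader(vertex, time):
    """Runs once per vertex: nudge the height with a sine wave (a ripple)."""
    x, y, z = vertex
    return (x, y + 0.1 * math.sin(x + time), z)

def pixel_shader(texel, light):
    """Runs once per pixel: scale the RGB colour by a per-pixel light value."""
    return tuple(min(255, int(channel * light)) for channel in texel)

# One triangle, three vertices, each passed through the vertex program.
triangle = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
moved = [vertex_shader(v, time=0.0) for v in triangle]

# Two pixels with the same texture colour but different lighting.
texels = [(200, 100, 50), (200, 100, 50)]
lights = [0.5, 1.5]
shaded = [pixel_shader(t, l) for t, l in zip(texels, lights)]

print(moved)
print(shaded)
```

The same bitmap colour comes out darker on one pixel and brighter on the other, which is the "per-pixel basis" part in a nutshell.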

Lightspeed Memory Architecture

"The Lightspeed Memory Architecture brings power to the GeForce3. That's why the NVIDIA GeForce3 is the platform of choice for the Microsoft® DirectX® 8 application program interface (API), and the technology foundation for the Microsoft next generation game console, Xbox™."

Memory performance has always been an issue with previous GeForce video cards, and with cards from most other manufacturers too. If the GPU is pumping out more information than the video ram can take in, a bottleneck occurs. The Lightspeed Memory Architecture is designed to address this issue. According to nVidia, by means of a crossbar-based memory controller, the GeForce 3 avoids bombarding the AGP bus with texture information and makes much more efficient use of its memory, effectively getting twice the work out of the available bandwidth. Previous GeForce cards wasted bandwidth by not using all that was available. Think of it like Fat16 and Fat32, where Fat32 is more efficient. Ok, that was a poor comparison, but that's the general idea.

Back in the StarForce review, I mentioned that at 1024x768 and lower resolutions, the GF2 Ultra tends to be faster than the GF3. The reason for this is that these low resolutions don't tax the memory of the GeForce cards. Therefore, the GF2's raw core speed is pumping out the data, and it is 50MHz faster than stock GF3s. This has changed a little now, as the GeForce 3 Ti500 is clearly faster than both cards at all resolutions. At higher resolutions for the original GeForce 3 and GeForce 2, the memory will start choking on the amount of data flooding in. This is where you'll see the immediate benefits of the Lightspeed Memory Architecture, as it handles the extra data with aplomb. Having a speedy subsystem will also help.
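To get a feel for why resolution hits the memory so hard, here's a very rough estimate of frame buffer traffic. Everything in it is an assumption for illustration: 32-bit colour, a 32-bit Z-buffer that gets read and written once per drawn pixel, an overdraw of 3, and 60 frames per second. It ignores texture reads and any bandwidth-saving tricks entirely, so don't read the output as measured data.

```python
# Rough frame buffer traffic estimate. All constants are illustrative
# assumptions, not measurements from this review.

BYTES_PER_PIXEL = 4 + 4 + 4   # colour write + Z read + Z write (32-bit each)
OVERDRAW = 3                  # assumed average times each pixel is drawn
FRAMES_PER_SECOND = 60        # assumed target framerate

def framebuffer_traffic_gb_s(width, height):
    """Estimated frame buffer traffic in GB/sec at a given resolution."""
    pixels = width * height
    bytes_per_frame = pixels * BYTES_PER_PIXEL * OVERDRAW
    return bytes_per_frame * FRAMES_PER_SECOND / 1e9

low = framebuffer_traffic_gb_s(1024, 768)
high = framebuffer_traffic_gb_s(1600, 1200)

print(f"1024x768:  {low:.2f} GB/sec")
print(f"1600x1200: {high:.2f} GB/sec")
print(f"Ratio:     {high / low:.2f}x")
```

Under these assumptions, going from 1024x768 to 1600x1200 needs roughly two and a half times the traffic before a single texture is fetched, which is why the memory subsystem matters more as the resolution goes up.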

High-resolution Antialiasing (HRAA)

NVIDIA's patented high-resolution antialiasing (HRAA) generates high-performance samples at nearly four times the rate of GeForce2 Ultra, while delivering the industry's best visual quality.

3dfx pimped antialiasing (AA) with their last Voodoo cards, and nVidia has taken it a step further with the GeForce 3. AA gaming is becoming more of a reality now, since previous AA implementations were slow. Image quality wise, I don't notice nVidia's HRAA to be any better than their standard 2x FSAA. Speed was close, as the difference between the two was hardly noticeable.

Unlike previous GF2 cards, I feel that FSAA is very playable right now, but I still wouldn't use it at 1280 x 1024 resolution and up. Sure, the benchmarks look ok, but in reality, there will be too many peaks and valleys in terms of framerates when playing an intense action game. Although I didn't benchmark it, I was surprised Max Payne ran great at 1600 x 1200, with HRAA on. Don't ask me why I did that, I just wanted to see. It was completely playable, even in the big gun fights, and looked fantastic.

Personally, I prefer playing at a higher resolution, with any anti-aliasing turned off. Max Payne is a slower game (movement, that is) so it wasn't an issue with AA on, but since my gaming preferences revolve around Quake engines, framerates are more important than pretty, blurry pixels.

If you want more information on these topics, I fully recommend reading the GeForce 3 technology guides at , and at . Those sites will be able to explain it a lot better than I will.

Overclocking

With the Ti500 already being highly clocked, we kind of wondered what the point would be. Since many people do this, we figured we'd let you know how it did. The out-of-the-box drivers and the ones available online don't allow for any overclocking. This won't really be a problem since we'll just use the . You can also use Powerstrip, but us gimps prefer free stuff, so coolbits or the are our choices. Both are available at the .

I didn't have time to test it out with the Crystal Orb, but we did use the AGP Airlift, and I turned on all my case fans to move the air through the system. I have a nice 120mm blowhole resting above the AGP/PCI slots, so I don't think a lack of cooling will be an issue.

Ok, maybe the cooling could have been better. We only managed a mere 9MHz core overclock, but we got a decent 68MHz memory overclock. I'm pretty sure the stock cooling held it back. We went for 260/570 initially, and were greeted with a nice blank screen. Even though we chose to "Test New Settings", the system wouldn't seem to recover. Some of the higher overclocked settings worked ok, but we did experience lockups occasionally. We settled for 249/568 for now.
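To put the 249/568 result in perspective against the stock 240/500 clocks, here's the percentage math as a small Python snippet. The helper name is just something I made up for this example.

```python
# Percentage gain of the 249/568 overclock over the stock 240/500 clocks.
# overclock_percent is an illustrative helper, not part of any tool.

def overclock_percent(stock_mhz, overclocked_mhz):
    """Return the overclock as a percentage of the stock clock."""
    return (overclocked_mhz - stock_mhz) / stock_mhz * 100

core_gain = overclock_percent(240, 249)     # core: 9MHz over stock
memory_gain = overclock_percent(500, 568)   # memory: 68MHz over stock

print(f"Core:   +{core_gain:.2f}%")
print(f"Memory: +{memory_gain:.2f}%")
```

That works out to a gain of just under 4% on the core and a little over 13% on the memory, so any overclocked benchmark gains will mostly come from the ram.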

Benchmarking

Since it wouldn't be a fair test, I've dropped the GeForce 2 from our benchmarks. If the GF2 numbers are important, please refer to our MSI StarForce review. Here's our test bed:

Athlon XP 1800+ (stock speed), 512MB (2 Dimms), Asus A7V266-E, Visiontek XTasy 6964, Windows XP Professional, Detonator 23.11, VIA 4 in 1 v4.35a.

We performed a clean install of the OS (thank goodness for Symantec Ghost), and ran our standard drivers which we've used in the past. We also watched for stability issues and image quality.

We'll be presenting the following benchmarks in order:

Quake 3 Arena

Return to Castle Wolfenstein

3D Mark 2001

Unreal Tournament

Serious Sam

Max Payne

Although the main numbers will be stock speed benchmarks, we will also provide some overclocked results. A description of the benchmarks will be provided as we move on.
